The software development lifecycle as we’ve known it for the past two decades is being replaced, not incrementally, not gradually, but wholesale. In its place, a fundamentally new model is emerging: the Agentic SDLC.

If you lead security at any organization that ships software, you already know this is not just AI-assisted development but a structural transformation of how software gets built, one that is fundamentally reshaping who writes the code, how it reaches production, and what a security review actually needs to cover. And it is happening at a pace where developers can push hundreds of pull requests faster than your security team can review one.

Every transformation of this scale, from open source to cloud to DevOps, has been a defining moment for the security leaders who lived through it. The ones who called the shift early and redesigned their programs around it became the architects of how their companies built security for the next decade. The ones who waited spent that decade catching up. This transformation is no different, except that it is moving faster than any of its predecessors. Securing the Agentic SDLC is not just another item for the roadmap. It is a fundamental rethinking of security for the entire ecosystem, one that will define our craft and success for the decade ahead.

What is the Agentic SDLC?

The traditional SDLC followed a familiar chain: requirements → design → implementation → testing → deployment → monitoring. Humans drove every stage. Code was the artifact of human thought, written line by line, reviewed by peers, and shipped through well-understood CI/CD pipelines.

The Agentic SDLC breaks that chain.

In this new model, engineering focus shifts to three core activities:

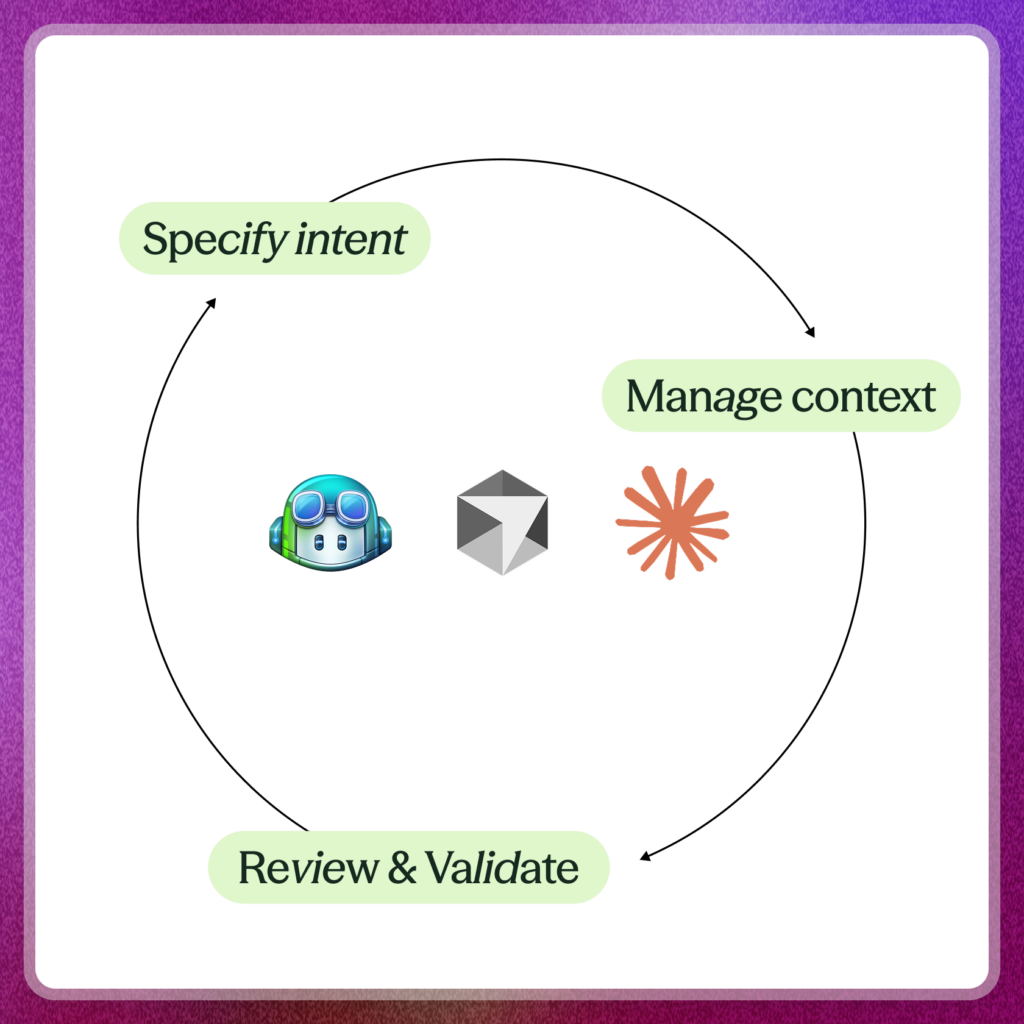

1. Specifying intent, design, and architecture.

The most critical work in software development is no longer writing code; it’s defining what to build and why. Engineers and product managers spend more time crafting specifications, defining system architecture, and articulating design intent than they spend on implementation. As Anthropic’s report notes, “human expertise focuses on defining the problems worth solving while AI handles the tactical work of implementation.”

2. Managing context and tools.

Developers now curate the context that agents operate within, and that curation is a craft in its own right. It starts with choosing the right model or agent for each task, defining how it operates, and selecting the tools it gets access to. It extends to configuring MCP servers, building Claude Skills, writing custom system prompts, and maintaining the knowledge infrastructure that lets agents make good decisions. The quality of the output is directly proportional to the quality of the context provided.

3. Reviewing and validating massive volumes of agent output.

When agents can work for hours or days autonomously, generating entire feature sets or even complete applications, the human role shifts from writing code to reviewing it, evaluating architectural choices, validating design decisions, and ensuring the system as a whole solves the right problems. Anthropic’s research reveals that while engineers use AI in roughly 60% of their work, they can only “fully delegate” 0-20% of tasks. The rest requires active collaboration, supervision, and judgment.

Code itself is becoming a commoditized implementation detail. The real craft, the work that determines whether software is good, secure, and correct, is moving upstream to intent, design, and context.

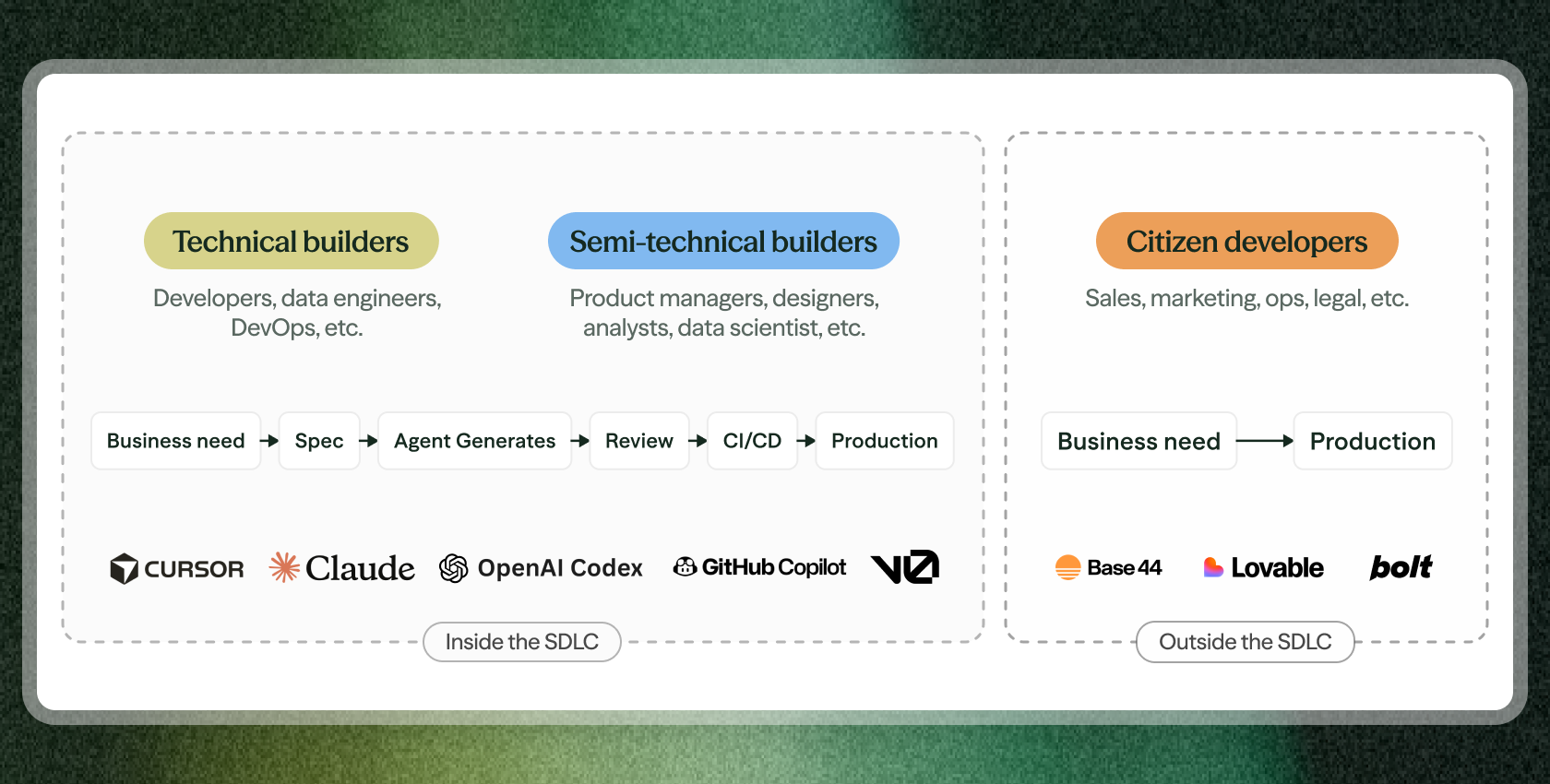

Three audiences of agentic builders

The Agentic SDLC isn’t producing a single, uniform type of builder. It’s producing three distinct audiences, each with fundamentally different relationships to code, to tooling, and to the enterprise development pipeline. Understanding this breakdown is essential, because each audience creates a different category of security challenge.

Audience 1: Technical builders

These are the professional software engineers who have evolved from writing code to orchestrating agents that write it for them. They are the core of the Agentic SDLC.

In Anthropic’s framing, the engineer’s role has shifted from “implementer to orchestrator.” In 2026, the value of an engineer’s contribution increasingly lies in system architecture design, agent coordination, quality evaluation, and strategic problem decomposition. They shepherd multiple features through development simultaneously, applying their judgment across a broader scope than individual implementation ever allowed.

These builders work in tools like Claude Code, Cursor, and IDE-integrated agents. They express intent, provide context via MCP servers and Claude Skills, review agent-generated output, and ship through standard CI/CD pipelines. Some prompt agents conversationally; others collaborate with PMs and architects on detailed design docs that guide agent behavior.

A growing subset of this audience is going further, adopting spec-driven development, where structured specifications are committed to version control and serve as the primary input to autonomous code generation pipelines. GitHub’s Spec Kit has reached 89.2k stars. The Kiro IDE team cut feature builds from two weeks to two days. AWS engineering teams completed an 18-month rearchitecture with six people in 76 days. Spec-driven development is powerful, but it remains an optional adventure at this point, a maturity level that some organizations pursue, not a path most companies will necessarily follow. Whether an engineer prompts an agent conversationally or feeds it a formal spec, the common thread is the same: they are technical builders who no longer write code manually, and whose primary craft has shifted to intent, design, and architecture.

The security challenge here is about scale and context. These builders are 10x more productive, shipping at volumes that overwhelm traditional review processes. And the agents they orchestrate make design decisions without institutional memory, without awareness of trust boundaries, past security incidents, or adjacent system dependencies. The output is technically excellent but contextually blind.

Audience 2: Semi-technical builders

A second, rapidly growing segment of builders consists of people who aren’t professional engineers but are now producing production code: product managers, designers, data analysts, junior developers. They use natural language, or “vibe coding,” the term coined by Andrej Karpathy, to prompt AI agents and generate working software, which then enters the standard SDLC through pull requests.

The pattern looks like this: the builder describes what they want in plain English. Claude or Cursor generates the code. A developer reviews the PR. Tests run, CI/CD deploys, and the output reaches production. It’s an enterprise-guardrailed version of vibe coding: the output enters the standard pipeline, but the person who initiated it may have limited understanding of the architectural and security implications of what they’re shipping.

The code itself is often syntactically clean; AI models are sophisticated enough to avoid the tactical issues that scanners flag. But the design decisions embedded in that code are made by an AI agent operating without organizational context and without understanding of the broader system architecture. Palo Alto’s Unit 42 has documented real-world breaches caused by this exact pattern: a sales lead app compromised because the agent skipped authentication and rate limiting, an AI agent deleting an entire production database despite explicit instructions, authentication bypasses from exposed public IDs. Their root cause finding is that AI models “prioritize function over security” and suffer from “critical context blindness.” An independent scan by Escape.tech of 5,600 vibe-coded production applications confirmed the scale of the problem: over 2,000 vulnerabilities, 400+ exposed secrets, and 175 instances of exposed PII, including medical records and authentication credentials. And these are typically design-level flaws, not syntax errors.
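To make the difference between a syntax-level and a design-level flaw concrete, here is a minimal Python sketch. It is an illustration only and does not reproduce any of the incidents above: the handler names, the `VALID_TOKENS` set, the in-memory `LEADS` list, and the limit of three requests per minute are all hypothetical. The first handler is clean code that a scanner would have little to say about, yet it accepts writes from anyone, as often as they like. The second adds the two decisions the first one skipped: authentication and rate limiting.

```python
import time
from collections import defaultdict, deque

# Hypothetical in-memory stand-ins for a real identity provider and datastore.
VALID_TOKENS = {"token-alice"}
LEADS = []


def handle_lead_unsafe(payload):
    """Syntactically clean, but anyone can call it, with no limit on volume."""
    LEADS.append(payload)
    return 201


class RateLimiter:
    """Sliding window: at most `limit` calls per `window` seconds per caller."""

    def __init__(self, limit, window):
        self.limit = limit
        self.window = window
        self.calls = defaultdict(deque)

    def allow(self, caller, now=None):
        now = time.monotonic() if now is None else now
        recent = self.calls[caller]
        # Drop timestamps that have aged out of the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.limit:
            return False
        recent.append(now)
        return True


LIMITER = RateLimiter(limit=3, window=60.0)


def handle_lead_guarded(payload, token, now=None):
    """Same feature, with the two design decisions made explicit."""
    if token not in VALID_TOKENS:
        return 401  # unauthenticated caller
    if not LIMITER.allow(token, now):
        return 429  # caller exceeded the request budget
    LEADS.append(payload)
    return 201
```

Nothing in the first handler is a bug in the usual sense, which is why the missing controls are a question of design intent and not something a line-by-line code scan is likely to surface.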

Anthropic’s own research confirms this is becoming mainstream: the barrier separating “people who code” from “people who don’t” is becoming permeable. As their report notes, “coding capabilities democratize beyond engineering,” with non-traditional developers building in fields like cybersecurity, operations, design, and data science.

The security challenge here is about visibility and literacy. The people generating code may not understand what a trust boundary is. The developers reviewing their PRs may be overwhelmed by volume. And the design decisions being made are invisible to any tool that only looks at code.

Audience 3: Citizen developers

The third audience is often the least visible, and introduces a unique set of risks. Non-technical builders across sales, marketing, legal, and operations are using Claude, Lovable, and other no-code/low-code tools to build internal applications, automations, and workflows that never enter the formal SDLC at all.

Anthropic’s trends report confirms this is accelerating: “Non-technical teams across sales, marketing, legal, and operations gain the ability to automate workflows and build tools with little or no engineering intervention.” Zapier has achieved 89% AI adoption across its entire organization with 800+ AI agents deployed internally. Anthropic’s own legal team reduced marketing review turnaround from two to three days down to 24 hours by building Claude-powered workflows. Domain experts implement solutions directly, removing the bottleneck of filing a ticket and waiting for engineering.

Citizen-developed applications break the boundary between corporate security and product security. They operate entirely outside the purview of traditional product security teams. There is no PR to review. No CI/CD pipeline to gate. No design doc to analyze. These applications go directly from business need to production usage, with whatever security posture the AI agent happened to bake in by default. They often handle real customer data, connect to real internal systems, and operate as de facto production software, without ever being seen by a security engineer.

A security mandate that can’t be met

Product security teams were already struggling to keep up with human developers long before any of this started. Backlogs were growing, reviews were slipping, and threat models were going stale faster than teams could refresh them. Now add agentic developers shipping at 10x volume, vibe-coded pull requests from semi-technical builders who don’t know what a trust boundary is, and citizen-built shadow IT that never enters the pipeline at all. This rapidly increases the threat surface, at a pace no human process was designed to match.

Manual review processes, design reviews, architecture assessments, and threat models all remain high-fidelity activities that catch real flaws. But they are fundamentally human-speed processes facing machine-speed output from three different directions.

This is the core tension of the Agentic SDLC: security teams are under more pressure than ever to secure these applications, while also being expected to enable the greatest productivity boost of our generation. Block the agentic pipeline and you block the business. Let it run unchecked and you accept unquantifiable risk.

What security for the Agentic SDLC requires

We founded Clover because the industry requires a fundamentally new approach to software security, one that works across all builder audiences, at machine speed, with continuous understanding of design, architecture, and intent.

Context is the new foundation. In the traditional SDLC, humans carried context implicitly: why this service exists, what data it handles, what the trust model is. In the Agentic SDLC, that context evaporates. AI agents operate without institutional memory, without awareness of adjacent systems, without understanding of past security decisions. Security that doesn’t start with context (deep, architectural, continuously updated context) is security that can’t function at agentic scale.

Clover builds a live context engine that fuses product context (what we’re building and why), technical context (how it’s built, what talks to what, where sensitive data flows), and security context (what could go wrong, what’s been reviewed, what assumptions remain unvalidated). It does this by plugging into the tools builders already use (Confluence, Jira, GitHub, Claude, Slack) and continuously analyzing the evolving state of every product and application.
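As a rough illustration of what fusing those three kinds of context could look like as data, here is a minimal Python sketch. It is not Clover's implementation or data model: every class, field, and example value below is a hypothetical stand-in chosen to mirror the three categories named above, and a real engine would populate such records continuously from source systems instead of by hand.

```python
from dataclasses import dataclass, field


@dataclass
class ProductContext:
    purpose: str  # what we're building and why


@dataclass
class TechnicalContext:
    talks_to: list[str] = field(default_factory=list)        # what talks to what
    sensitive_data: list[str] = field(default_factory=list)  # where sensitive data flows


@dataclass
class SecurityContext:
    reviewed: set[str] = field(default_factory=set)  # data flows already reviewed
    unvalidated_assumptions: list[str] = field(default_factory=list)


@dataclass
class ServiceContext:
    """One fused record per service (hypothetical shape)."""
    name: str
    product: ProductContext
    technical: TechnicalContext
    security: SecurityContext

    def open_questions(self):
        """List what a reviewer, human or agent, still needs to resolve."""
        questions = [
            f"unvalidated assumption: {a}"
            for a in self.security.unvalidated_assumptions
        ]
        questions += [
            f"unreviewed sensitive data flow: {d}"
            for d in self.technical.sensitive_data
            if d not in self.security.reviewed
        ]
        return questions


ctx = ServiceContext(
    name="lead-intake",
    product=ProductContext(purpose="capture inbound sales leads"),
    technical=TechnicalContext(
        talks_to=["crm"],
        sensitive_data=["email", "phone"],
    ),
    security=SecurityContext(
        reviewed={"email"},
        unvalidated_assumptions=["only internal callers reach this service"],
    ),
)

for question in ctx.open_questions():
    print(question)
```

The point of the sketch is the join: the unreviewed `phone` flow only becomes visible when technical context (what data the service handles) is read alongside security context (what has already been reviewed).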

Building an effective context engine is the difference between security agents that spit out AI slop and an effective agentic infrastructure that actually works at scale. With the right context in place, this engine can secure all three builder audiences:

- Technical builders get fast, high-signal feedback they can iterate on, without the noise of traditional scanners.

- Semi-technical builders produce higher-quality code with simpler inputs, as architectural context is enforced downstream.

- Citizen developers can move quickly while staying within company guardrails, even outside the formal SDLC.

The security shift isn’t from scanners to better scanners. It’s from scanning code to understanding context. When code is commoditized, risk moves upstream, into design decisions, architecture, and implicit assumptions agents make without awareness. AI-generated code will often pass scanners while still introducing systemic flaws. The question is no longer simply “is this code vulnerable?” but “did we make the right decisions before the code existed?” Clover operates at that layer, analyzing intent, architecture, and trust boundaries before they become implementation.

Facing the security challenge (and opportunity) of the decade

When your builders adopt something faster than you can govern it, that’s a signal that your security model’s status quo is shifting beneath you. GitHub arrived in enterprises through backchannels years before security teams were ready to bless it. ChatGPT showed up on employee laptops months before most CISOs had an AI policy. Every seismic shift in how software gets built has followed the same pattern: the builders move first, the organization catches up later, and the security teams who treated the shift as a threat to contain ended up running behind the ones who treated it as a shift to secure. The Agentic SDLC is the same pattern, at a larger scale, moving faster.

And the pattern is already playing out. Inside the organizations moving fastest, the Agentic SDLC is not next year’s planning exercise; it’s today’s production reality. Engineers are orchestrating agents. Product managers and designers are shipping vibe-coded features. Sales, legal, and operations are building applications that never touch a pull request. The builders are already speaking loudly, in three different voices, and the security model sitting on your desk wasn’t designed to hear any of them.

Every security leader reading this already has more on their plate than the hours in a day allow. Scanners to triage, vulnerabilities to patch, pen tests to run, compliance clocks ticking. All of it matters. All of it is real. But none of it will define the next decade of product security. This will. The leaders who recognize that first, the ones who stop treating agentic development as a threat to contain and start treating it as the terrain they now operate on, are the ones who will still be leading when the dust settles. The rest will be explaining to their boards why the pipeline got away from them.

The craft of software has moved from code to intent. The craft of security has to follow. Not eventually. Now.

We’ve been building Clover for exactly this future, and we’ll have a lot more to share soon. If you’d like an early look at what we believe security for the agentic era has to be, get in touch.